Chief Security Officer at BeyondTrust, overseeing the company’s security and governance for corporate and cloud-based solutions.

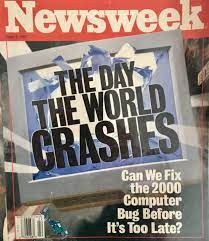

The year 2000 was a technology challenge for every company and software vendor. Software written before 2000 typically stored the year as a two-digit value to conserve memory. When a program stored a value of “98,” it could not tell whether the year was 1898, 1998 or even 2098. It simply assumed 1998, since the 1900s were the current century.
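To make the ambiguity concrete, here is a minimal Python sketch of the kind of assumption those programs made. The function name and its `century` parameter are hypothetical, chosen only for illustration, and are not taken from any real legacy system.

```python
def expand_year_fixed_century(two_digit_year: int, century: int = 1900) -> int:
    """Expand a two-digit year by assuming one hard-coded century."""
    if not 0 <= two_digit_year <= 99:
        raise ValueError("expected a two-digit year between 0 and 99")
    return century + two_digit_year


# "98" becomes 1998, which is what a program written in the 1990s intended.
print(expand_year_fixed_century(98))  # 1998

# "00" becomes 1900, so the year 2000 sorts before 1998.
print(expand_year_fixed_century(0))   # 1900
```

Nothing in the stored value says which century was meant, so the code has to guess, and the guess is only correct until the century changes.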

As the new millennium approached, these programs were predicted to fail. Every company that produced its own software, and every software vendor, had to review its source code and fix every place a date was entered, processed or stored to ensure it would work correctly.

That was 22 years ago and, unfortunately, a closely related problem still exists.

The next time you go online or fill out a form with a date, look to see if the year field allows for the complete year, like 2021, or only an abbreviation, like “21.” If it is the latter, the developers have built a new application with the same flaw that caused Y2K. While this may sound like a trivial problem since we are about 80 years away from the next century, it will only get worse with each decade.

Take a look at an e-commerce site where you’re asked to enter your credit card information. The expiration date on almost every credit card is expressed as mm/yy. While some e-commerce sites ask for a four-digit year, others do not. The ones that do not assume the prefix 20, and that prefix is hard-coded in the web application. Because cards expire within a few years of being issued, the assumption is safe today, but the hard-coded 20 is a change that will have to be made in the future.
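The difference between the hard-coded prefix and a safer alternative can be sketched in a few lines of Python. Both function names and the `max_years_ahead` parameter are assumptions made for this illustration. The second function uses a sliding window relative to today's date, which is one common workaround and not necessarily what any particular site does.

```python
from datetime import date
from typing import Tuple


def parse_expiry_hard_coded(mm_yy: str) -> Tuple[int, int]:
    """Return (year, month), always assuming the card expires in the 2000s."""
    month_text, yy_text = mm_yy.split("/")
    return 2000 + int(yy_text), int(month_text)


def parse_expiry_windowed(mm_yy: str, today: date,
                          max_years_ahead: int = 20) -> Tuple[int, int]:
    """Return (year, month), picking the century relative to `today`.

    The result is the only year ending in the given two digits that is
    no more than `max_years_ahead` years after the current year.
    """
    month_text, yy_text = mm_yy.split("/")
    month, yy = int(month_text), int(yy_text)
    if not 1 <= month <= 12 or not 0 <= yy <= 99:
        raise ValueError(f"invalid expiration date: {mm_yy!r}")
    year = today.year - today.year % 100 + yy
    latest = today.year + max_years_ahead
    if year > latest:
        year -= 100   # too far ahead: it belongs to the previous century
    elif year < latest - 99:
        year += 100   # too far back: it belongs to the next century
    return year, month


# A card expiring 01/02, entered by a shopper in June 2098:
print(parse_expiry_hard_coded("01/02"))                  # (2002, 1)
print(parse_expiry_windowed("01/02", date(2098, 6, 1)))  # (2102, 1)
```

The window only postpones the guess. Asking for, and storing, the full four-digit year removes it.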

As we get closer to 2060 and 2070, the problem will begin to accelerate. We started using computers for record storage and processing in the 1960s and 1970s, so two-digit years from those decades will start to collide with current ones. That is still around 50 years away, but we have already demonstrated that we are embracing more and more technology in our lives, and poor coding practices that use only two-digit years will exacerbate the problem if the software still exists, in some form, a few decades from now. Consider that banks still run COBOL applications, and much of that source code is 30 or even 40 years old. Realistically, this is not a rogue meteor strike 50 years from now that we need to prepare for, but rather a predictable event created by our own laziness.

Considering this problem exposes another, more serious Y2K-style issue. What do we do with all the data and applications that already use faulty two-digit year codes? Do we proactively engage their developers and correct the problem? Do we ignore the issue and assume the software will be sunset long before the next century? Or do we make a conscious effort to replace these applications in our environments to ensure that they do not become a problem in the future?

For programmers and application designers, consider the future of your application and what a minor difference in coding practice could mean. Done improperly, it could create another Y2K scenario years from now, or cause confusion in just a few decades, when two-digit years can no longer be told apart from the birthdates of people born in the 1900s. One thing is for certain: Y2K happened years ago, but we continue to make the same coding mistakes that created the problem in the first place.
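The birthdate confusion is easy to reproduce today. The Python sketch below uses the standard library's `%y` format code, which follows the POSIX convention of mapping 69 to 99 onto 1969 to 1999 and 00 to 68 onto 2000 to 2068. The two helper names are hypothetical, and the sample date is invented for illustration.

```python
from datetime import date, datetime


def parse_birthdate_two_digit(text: str) -> date:
    """Parse mm/dd/yy, letting the library guess the century."""
    return datetime.strptime(text, "%m/%d/%y").date()


def parse_birthdate_iso(text: str) -> date:
    """Parse yyyy-mm-dd (ISO 8601), where the century is explicit."""
    return date.fromisoformat(text)


# Someone born on March 15, 1945, entered with a two-digit year:
print(parse_birthdate_two_digit("03/15/45").year)  # 2045

# The same birthdate entered with the full year:
print(parse_birthdate_iso("1945-03-15").year)      # 1945
```

Collecting and storing the full four-digit year costs two extra characters and means no library or developer has to guess.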

Source: https://www.forbes.com/sites/forbestechcouncil/2022/01/11/why-the-y2k-problem-still-persists-in-software-development/?sh=6235ecd5ff7d